OpenAI Announces Overhaul of Security Protocols to Enhance Collaboration with Canadian Authorities

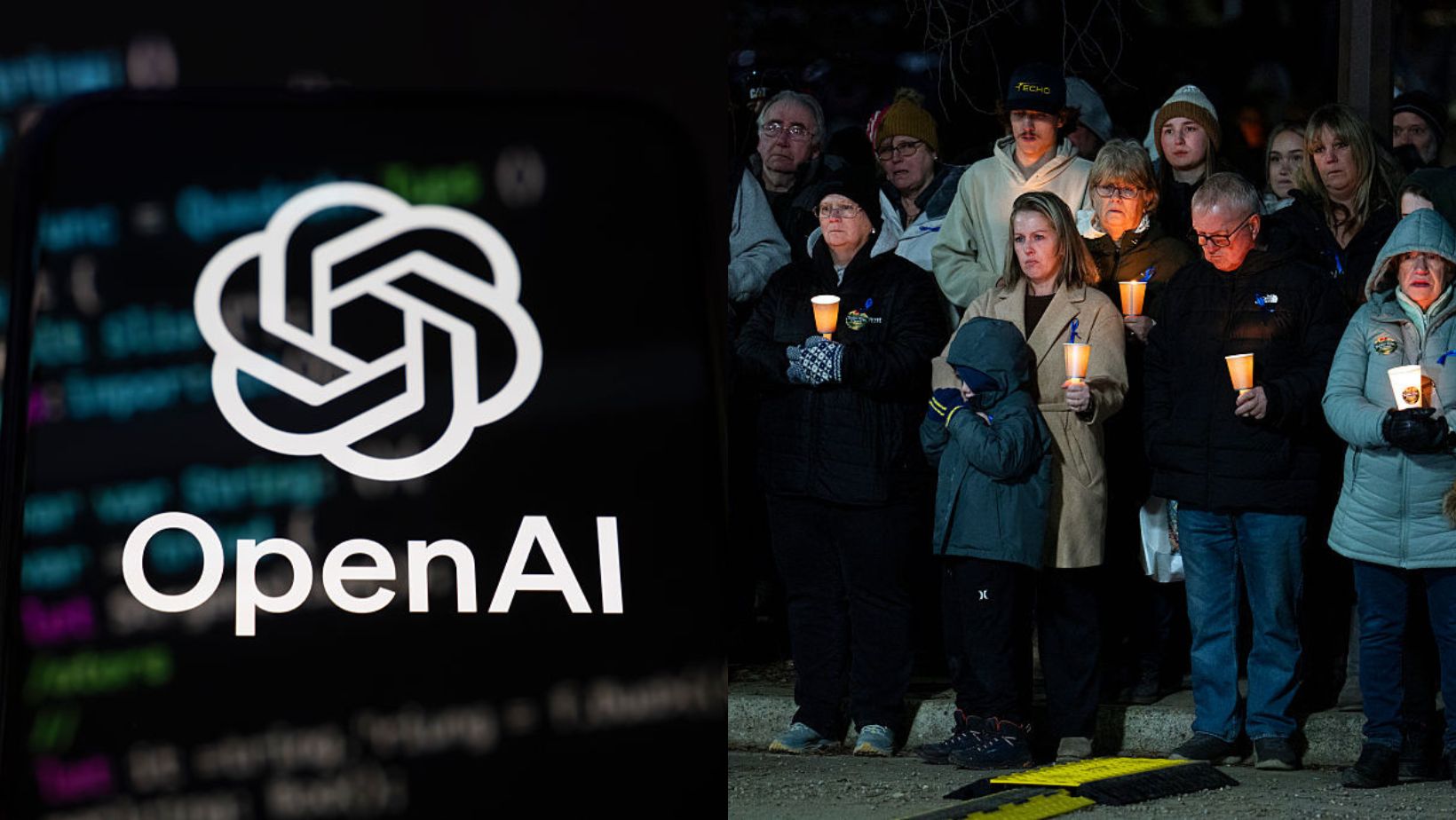

On Friday, OpenAI announced a significant overhaul of its security protocols aimed at improving collaboration with Canadian authorities. This move comes in response to recent tragedies, such as the Tumbler Ridge massacre, with the goal of preventing similar incidents in the future.

“At the request of ministers, we will establish direct points of contact with Canadian authorities to ensure that we quickly refer cases to them when we identify potential violence,” said Ann M. O’Leary, vice president of global policy at OpenAI, the company behind ChatGPT.

This initiative marks the first step in a broader effort to enhance the safety and accountability of AI systems. The company has committed to strengthening its referral processes by incorporating insights from mental health and behavioral experts. Support resources offered to individuals who appear to be in distress will also be better tailored to their local communities.

Redesigning Security Protocols

OpenAI’s decision to redesign its security protocols is part of a larger commitment to improving the safety of its AI technologies. According to O’Leary, these measures are only the beginning of a long-term strategy to address concerns raised by Canadian officials and the public.

In the coming months, OpenAI plans to engage with Ottawa, provincial governments, industry partners, and local stakeholders to ensure that collective efforts meet the needs of Canadians. Through this collaboration, the company aims to continuously refine its safety models and policies.

The AI Minister’s Response

Evan Solomon, Canada’s Minister of Artificial Intelligence and Digital Innovation, said he wants to understand how digital platforms respond to threats of extremism. He emphasized the importance of transparency in how platforms handle credible warning signs of violence.

Solomon is scheduled to meet with OpenAI CEO Sam Altman next week. Earlier this week, ministers in Prime Minister Mark Carney’s government criticized ChatGPT’s inadequate security measures, which came to light after the Tumbler Ridge massacre. Their meeting with OpenAI representatives was part of an ongoing effort to address these concerns.

The police investigation into the incident remains ongoing, and details of the case have not been disclosed publicly. “We discussed how imminent and credible risks are identified, how cases move from automated detection to human review, and how reports are handled, particularly when young people may be involved,” Solomon said in a post on X. “We did not discuss the details of the case, as the police investigation is still ongoing.”

Violent Scenarios Shared on ChatGPT

OpenAI’s announcement followed revelations from The Wall Street Journal that Jesse Van Rootselaar, the perpetrator of the Tumbler Ridge shooting, had shared violent intentions with the chatbot months before carrying out the attack. OpenAI reported this information to the police only after the shooting.

Van Rootselaar opened fire on February 10 at a high school in Tumbler Ridge, British Columbia, killing eight people.

After Van Rootselaar shared violent scenarios with ChatGPT over several days, OpenAI suspended the account but did not notify Canadian authorities. In a letter sent to the Canadian government on Thursday, the company justified this decision by stating that it had not “identified a credible or imminent plan that met [its] threshold for referring the matter to law enforcement.”

On Friday, OpenAI also reported that the shooter had circumvented its ban by creating a second account. The company says it only discovered this after the RCMP publicly named Jesse Van Rootselaar.

Calls for Stricter AI Regulation

On Tuesday, the Canadian government indicated its openness to stricter AI regulations if companies fail to improve their security protocols. This stance reflects growing concerns about the role of AI in enabling harmful behavior and the need for greater oversight.

As the debate over AI safety continues, OpenAI’s latest actions signal a shift toward more proactive engagement with regulatory bodies and a commitment to addressing the challenges posed by advanced AI systems.

Created by humans, assisted by AI.